Feel the ASI

We are approaching a pivotal moment in human history, and we need your help to navigate it responsibly.

There is a growing consensus among notable AI researchers, tech leaders, and public officials that highly capable AI systems, possibly even superintelligence, could arrive within the next 5 to 10 years.

It sounds like science fiction, but it's a possibility we all need to take seriously. AI systems are approaching the ability to improve themselves without human help. Once that happens, progress could accelerate beyond our ability to predict or control.

What Leading Voices Are Saying

Scientists, tech leaders, and elected officials across the political spectrum are raising the alarm.

"Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war." (Statement on AI Risk, Center for AI Safety, 2023)

average probability that advanced AI leads to outcomes extremely bad for humanity.

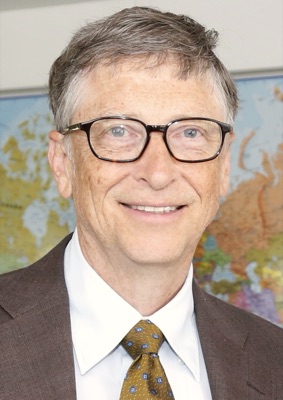

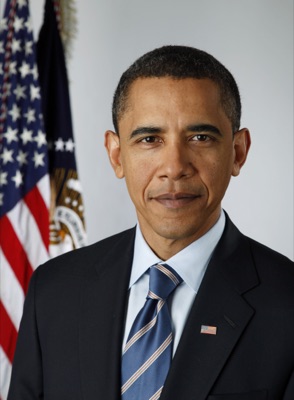

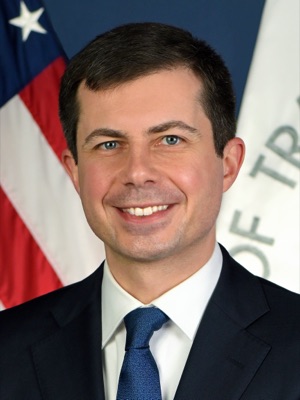

Image credits

Gates — Kuhlmann/MSC, CC BY 3.0 · Hinton — Xuthoria, CC BY-SA 4.0 · Hassabis — John Sears, CC BY-SA 4.0 · Altman — Steve Jurvetson, CC BY 2.0 · Amodei — TechCrunch, CC BY 2.0 · Pichai — Lukasz Kobus, CC BY 4.0 · Musk — Duncan.Hull, CC BY-SA 4.0 · Hawking — Jim Campbell, CC BY 3.0 · Obama, Vance, Buttigieg, Sanders — official portraits, public domain.

Essential Resources

The AI Revolution: The Road to Superintelligence

A classic 2015 exploration of AI, exponential growth, and why superintelligence matters. Still one of the most accessible introductions you can read. Read Part 1 and Part 2.

AI 2027

A well-researched fictional scenario exploring plausible future outcomes in the race to AI supremacy. See also the AI Futures Blog for ongoing updates, forecast tracking, and policy analysis from the same team.

International AI Safety Report

The most authoritative global scientific assessment of AI capabilities and risks. Includes an accessible 3-page executive summary for non-experts.

The Problem

A clear explanation of the core challenges in AI alignment and why they matter.

Situational Awareness: The Decade Ahead

A comprehensive analysis of the path from GPT-4 to AGI to superintelligence, including national security implications.

If Anyone Builds It, Everyone Dies

A New York Times bestseller that argues building superhuman AI with current techniques poses an existential threat to humanity, and explains why the situation is still preventable.

Hank Green on AI Risk

An accessible, mainstream introduction to the AI safety case from one of YouTube's most trusted science communicators.

Should We Pause AI? Here's the Debate.

If superintelligent AI could cause human extinction, why don't we simply stop building it? A clear, animated overview of the main arguments, practical difficulties, and proposed responses.

AI Is A Massive Problem. Here's Why.

A thorough walkthrough of how modern AI actually works, why it's advancing so fast, and what the risks are. Well-produced and accessible even if you have no technical background.

Taking AI Doom Seriously For 62 Minutes

A thorough, well-produced deep dive into AI existential risk.

AI Explained

Stay updated on major AI developments with balanced, sensible analysis from the creator of SimpleBench.

AI in Context

Thoughtful analysis and commentary on AI developments, exploring both the technical and societal implications.

CivAI: Explore AI Capabilities

Interactive demos that let you experience what AI can actually do, from creating deepfakes in seconds to generating personalized phishing attacks, so you can understand the capabilities and risks firsthand.

BlueDot Impact Courses

Free courses on AI safety and governance designed to sharpen your thinking on what actually matters. No technical background required for the governance track.

Epoch AI

The gold standard for empirical AI trend data. Interactive dashboards tracking compute growth, model capabilities, and hardware trends. Hard numbers instead of hype or speculation.

AISafety.com

A curated portal for the AI safety ecosystem: field map of organizations, job boards, study courses, community links, events calendar, and funding directories. The best starting point if you want to explore what's out there.

One Useful Thing

A Wharton professor's hands-on exploration of what AI can actually do today. Concrete demonstrations and clear-eyed analysis that help you grasp the real capabilities and limitations of current systems.

AI Futures Project Blog

Ongoing analysis and forecasting updates from the team behind AI 2027. Follow along as they track how reality compares to their scenario, grade their predictions, and explore what comes next.

The Power Law

Grounded analysis of AI, national security, and emerging technology from one of the top forecasters in the field. Especially strong on US–China dynamics, AI policy, and where things are actually heading.

Target Curve

A blog exploring why automating intelligence is fundamentally different from previous technological revolutions, and what it means for society. Covers the evidence, addresses common objections, and focuses on what we can actually do about it.

Planned Obsolescence

Practical writing on AI preparedness from a leading safety researcher, focused on concrete milestones, timelines, and what we should actually expect.

Take Action

You now know what's at stake. Here's how to make a difference.

Make a Microcommitment

One small action every week, delivered to you. Share an article, sign a petition, email a representative. Never wonder what to do next. Just 5 minutes a week.

Add Your Name

Sign open letters calling for international AI safety standards. Takes seconds, and every signature strengthens the case for regulation.

Contact Your Representatives

Tell your elected officials you want real AI oversight. ControlAI provides pre-written templates. Just fill in your details and send.

Join a Community

Connect with others who take this seriously. Organize local events, coordinate advocacy campaigns, or contribute to grassroots efforts pushing for a pause on frontier AI development.

Learn AI Safety

Take free courses on the technical and governance challenges of advanced AI. BlueDot Impact runs cohort-based programs that have trained thousands of people now working in AI safety.

Work on AI Safety

If you want to dedicate your career to AI safety, 80,000 Hours offers free one-on-one advising to help you find high-impact roles in AI safety policy, research, and governance.

Never doubt that a small group of thoughtful, committed citizens can change the world; indeed, it's the only thing that ever has.